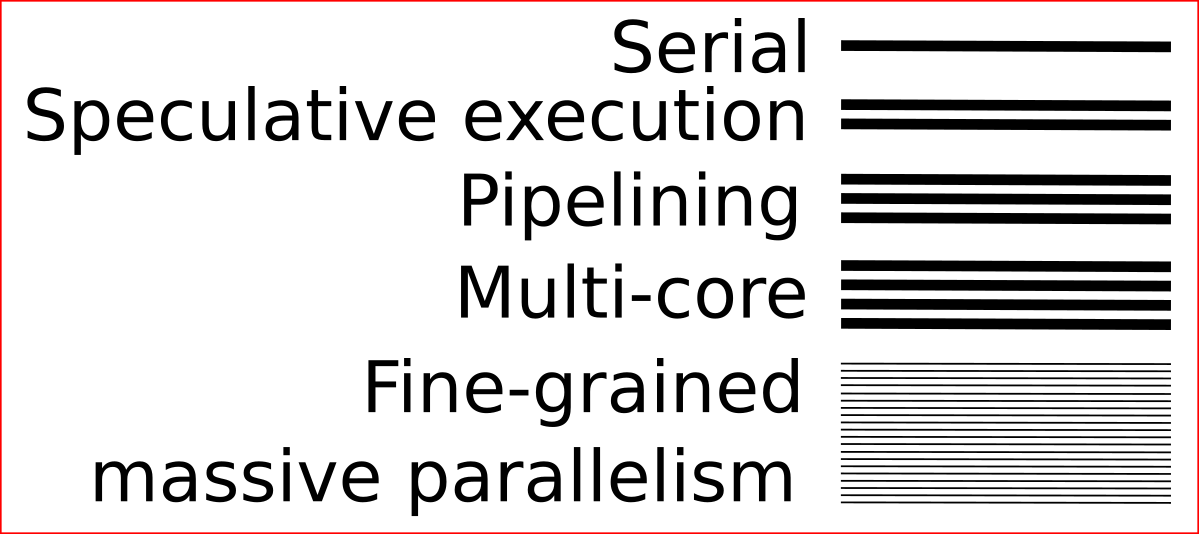

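Architectural limits to computation

This essay covers some architectural computational bottlenecks and limitations and describes how they might be overcome.

Three forms of hardware limitation will be discussed: the lack of parallelism (the von Neumann bottleneck), the reliance on global synchrony, and the restriction to digital rather than analog computation.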

Machine intelligence

This essay will argue that the widespread popularity of connectionist approaches to machine intelligence is likely to result in overcoming all three limitations.

Connectionist approaches are well placed to make use of fine-grained massive parallelism - since they are based on using large numbers of relatively simple components networked together. They are thus likely to dispense with the von Neumann bottleneck.

Similarly, connectionist approaches don't have much use for global synchrony. Sure, there are brain waves - but these are much slower than the firing frequencies of neurons. Dispensing with global synchrony allows components to operate at their own natural frequencies - instead of being held back by the slowest component in the whole system.

Lastly, connectionist approaches are a natural match for analog computations. Axon pulses vary smoothly in intensity, and firing thresholds within neurons are also smoothly variable. Neuromorphic approaches to computation are thus able to take advantage of analog computing - doing error correction and detection in software where needed.

As well as providing demand for high-performance parallel systems, machine intelligence might also be able to help by contributing to the engineering tasks of building the interpreters, compilers and virtual machines that will be required to allow existing software to run on the new architectures.

So far, computers have proliferated by being strong where humans are weak. They perform serial deterministic tasks much better than humans do - and so augment human abilities. However, these days computers are becoming better able to compete with humans in areas where humans are already strong. To compete effectively, the bottlenecks and limitations described in this essay will need to be dealt with.
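
The point above about global synchrony can be illustrated with a toy calculation. The following is a minimal sketch, not a model of any real chip: the function name, the cycle times and the time window are all hypothetical, and it ignores the handshaking and communication overhead that real asynchronous designs incur. It simply counts how many operations a set of components completes when all are tied to the slowest one, versus when each runs at its own rate.

```python
def operations_completed(cycle_times_ns, window_ns, synchronous):
    """Count operations finished by all components within a time window.

    cycle_times_ns: natural cycle time of each component (hypothetical values).
    window_ns: length of the observation window.
    synchronous: if True, every component is driven by one global clock.
    """
    if synchronous:
        # A global clock must be slow enough for the slowest component,
        # so every component advances once per slowest cycle.
        period = max(cycle_times_ns)
        return len(cycle_times_ns) * (window_ns // period)
    # Without a global clock, each component advances at its own rate.
    return sum(window_ns // t for t in cycle_times_ns)


# Hypothetical component cycle times, in nanoseconds.
cycle_times_ns = [1, 2, 2, 5]

sync_ops = operations_completed(cycle_times_ns, 1000, synchronous=True)
async_ops = operations_completed(cycle_times_ns, 1000, synchronous=False)
print(sync_ops, async_ops)  # 800 2200
```

Under these assumed numbers the asynchronous arrangement completes nearly three times as many operations, purely because the fast components are no longer held to the pace of the slowest one.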

The cloud

The most plausible location for these transformations to occur is inside large data centers. Just as retailing from the cloud introduced a long tail of products, so cloud computing will result in a long tail of rentable computing systems. In the cloud, computing architectures of minority interest will find their market - and the more promising ones will grow and blossom.

Various "neuromorphic" computers have already been constructed. If machine intelligence continues to snowball, this area could become a significant one.

More on the digital/analog issue

Parallelism and asynchronous operation seem like near-inevitable types of progress. However, the issue of whether computation will "go analog" is less obvious. It also seems more likely to be controversial.

One of the applications where using digital hardware makes the most sense is long-term storage. With storage, Kurzweil's "multiplication" argument (cited above) does not apply. If we look at biology, dynamic computations in brains are largely analog, while long-term storage of knowledge in genes is digital. That kind of set-up apparently makes reasonable engineering sense.

It is also possible that whether digital or analog computation is better depends on the substrate. When dealing with voltage and current, analog computing would be more efficient - since voltage and current are essentially analog traits. However, with crystalline computation it is easy to imagine that such systems might operate in a digital domain natively - in which case using analog computation would make little sense.

That still leaves open the possibility that analog substrates will be systematically more powerful at any given technological level - because they are better able to exploit the computational capabilities of physical systems.

Even if the ultimate form of computation turns out to be digital, it is possible that computing will go through analog phases on the way there. Connectionist architectures on transistors may well be one place where partly-analog computing makes sense.

At the moment, digital systems seem to have won in the marketplace. However, we should be tentative in drawing the conclusion that this will always remain so.

Consequences

Overcoming the architectural limitations described in this essay requires minimal technical breakthroughs. It does, however, require demand for machine intelligence systems.

A change in computational architecture could easily lead to several orders of magnitude of performance improvement on tasks involving machine intelligence. It is possible to get some hints at how much improvement is possible by looking at the human brain. This uses slow, electrochemical signal propagation and chemical components that recharge slowly to compute with - and yet manages some remarkable computing feats due to its overall architecture.

Use of parallel, asynchronous hardware would not necessarily result in incompatibility issues with existing programs. Parallel asynchronous systems can still be Turing complete - and so can simulate other computing systems. Asynchronous systems can simulate serial ones using techniques such as message passing.

The problem with serial systems is that they can simulate parallel computers only very slowly. If you have a parallel system, it will probably run serial programs more slowly than a dedicated serial machine - since it is not optimised for doing that. However, the performance issues associated with running serial programs on parallel machines are nowhere near as severe as the performance issues associated with running parallel programs on serial machines.

If we look at nature, it uses parallel, analog computing machines almost exclusively. Theoretical considerations appear to back up its approach. The architectural limitations of today's serial digital machines appear to be a hangover from human speech and reasoning systems - and from a lack of machine intelligence. As these factors change, so might our fundamental computing architecture.

Of course, initially, connectionist hardware will merely augment today's existing systems. However, it clearly has the potential to go further.

If there's a large-scale switch to massively parallel brain-like systems - instead of today's largely serial machines - that would probably represent the largest revolution the computer industry has ever seen. It seems to me that theoretical considerations suggest that this radical possibility is one that might actually happen.
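
The earlier claim that serial machines can simulate parallel ones only slowly can be made concrete with a toy example. This is a minimal sketch under stated assumptions: the threshold-unit network, its weights and the function name are all hypothetical, chosen only for illustration. Dedicated parallel hardware with one physical unit per node would perform the whole update in a single tick, whereas the serial loop below must visit every unit in turn, so its cost per tick grows with the number of units.

```python
def parallel_step(states, weights, threshold=1.0):
    """Emulate one tick of a toy parallel network on a serial machine.

    Every unit computes its new state from the PREVIOUS states of all units.
    Returns the new states and the number of unit updates the serial
    emulation had to perform for this single parallel tick.
    """
    new_states = []
    serial_updates = 0
    for row in weights:  # a serial machine visits the units one at a time
        total = sum(w * s for w, s in zip(row, states))
        new_states.append(1 if total >= threshold else 0)
        serial_updates += 1
    return new_states, serial_updates


# Hypothetical 3-unit ring: each unit copies the state of its predecessor.
weights = [
    [0, 0, 1],
    [1, 0, 0],
    [0, 1, 0],
]
states = [1, 0, 0]

states, cost = parallel_step(states, weights)
print(states, cost)  # [0, 1, 0] 3
```

With three units the serial cost is trivial, but the same loop over billions of densely connected units needs billions of updates for each tick that parallel hardware would complete in one.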


Most modern computers lack parallelism. They are typically based on CPUs that essentially execute a series of instructions.

Another computer science headache is synchronous operation. The need to keep all components in a system operating in deterministic synchrony imposes severe limits on what clock speeds are obtained. Arguably, this is what led clock speeds in microprocessors to plateau in the mid 2000s - while simple individual components such as transistors have continued to shrink in size and increase in switching speed.
