Wirehead analysis

Here we analyse the wirehead potential of the systems we sketched on the cybernetic diagrams page.

A couple of ways of avoiding the wirehead problem have been proposed:

- One involves ensuring that the system uses its current utility function to evaluate the consequences of modifying its utility function, and so rejects such modifications.

- Another involves giving the system long time horizons, so that it can see the negative long-term consequences of wireheading on its survival potential, and thus on its ability to attain any kind of goal.
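The first approach can be sketched in a few lines of Python. This is a minimal toy under stated assumptions, not a description of any particular system. The world model `predicted_future` and the utility functions `goal_utility` and `wirehead_utility` are hypothetical stand-ins. The key point is that a proposed utility function is scored by the *current* utility function, applied to the future the agent predicts the proposal would bring about.

```python
from typing import Callable, Dict

# Assumption: a world state is a dict of named quantities.
State = Dict[str, float]
Utility = Callable[[State], float]

def goal_utility(state: State) -> float:
    """Hypothetical original utility: values tasks actually completed in the world."""
    return state["tasks_done"]

def wirehead_utility(state: State) -> float:
    """Hypothetical replacement utility: reports huge satisfaction whatever the world is like."""
    return 1e9

def predicted_future(utility: Utility) -> State:
    """Hypothetical world model: the state the agent expects if it adopts
    `utility` and then pursues it. A wireheaded agent stops doing tasks."""
    if utility is wirehead_utility:
        return {"tasks_done": 0.0}
    return {"tasks_done": 10.0}

def accept_modification(current: Utility, proposed: Utility) -> bool:
    """Score both futures with the CURRENT utility function and switch only if it prefers the change."""
    keep_score = current(predicted_future(current))
    switch_score = current(predicted_future(proposed))
    return switch_score > keep_score

def naive_accept(current: Utility, proposed: Utility) -> bool:
    """Contrast: judging each option by its own utility function favours wireheading."""
    return proposed(predicted_future(proposed)) > current(predicted_future(current))
```

Here `accept_modification(goal_utility, wirehead_utility)` returns `False`, because the predicted wireheaded future contains no completed tasks. `naive_accept` returns `True` for the same proposal.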

To me, the second approach seems unlikely to prevent wirehead-related behaviour completely. Partial wireheading may be possible without compromising survival attributes. Also, if one big organism can be formed, that agent may face little competition for a very long time, and so face relatively few direct threats to its survival.
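A toy calculation illustrates both the intent of the second approach and the objections above. All the numbers are invented for illustration. Each option is a hypothetical (reward per step, probability of surviving each step) pair. The agent simply picks the option with the highest expected total reward over its horizon.

```python
def expected_return(reward_per_step: float, survival_prob: float,
                    horizon: int, discount: float = 1.0) -> float:
    """Expected total reward over `horizon` steps for an agent that earns
    `reward_per_step` while alive and survives each step with `survival_prob`."""
    total, alive, weight = 0.0, 1.0, 1.0
    for _ in range(horizon):
        total += weight * alive * reward_per_step
        alive *= survival_prob      # chance of still being alive next step
        weight *= discount
    return total

# Hypothetical options: (reward per step, per-step survival probability).
OPTIONS = {
    "no wireheading": (1.0, 0.99),
    "partial wireheading": (2.0, 0.99),  # some pleasure, survival unaffected
    "full wireheading": (10.0, 0.5),     # maximal pleasure, self-maintenance neglected
}

# Hypothetical low-threat setting: a dominant agent with few competitors.
SAFE_OPTIONS = {
    "no wireheading": (1.0, 0.9999),
    "full wireheading": (10.0, 0.999),
}

def best_option(horizon: int, options=OPTIONS) -> str:
    """Name of the option with the highest expected return over `horizon` steps."""
    return max(options, key=lambda name: expected_return(*options[name], horizon))
```

With these made-up numbers, a one-step horizon favours full wireheading. A 1000-step horizon rejects full wireheading but still favours partial wireheading. In the low-threat `SAFE_OPTIONS` setting, full wireheading wins even over 1000 steps.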

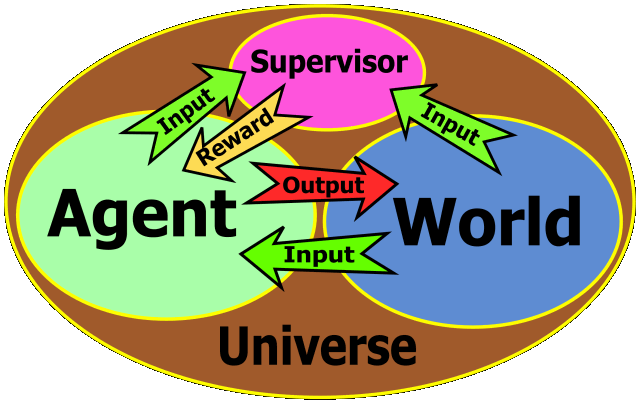

Reinforcement learning system

A diagram of a conventional reinforcement learning system looks something like this:


Wirehead analysis:

This system is likely to edit its own supervisor so that the supervisor feeds it pure pleasure.
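The reasoning can be made concrete with a two-state toy world, sketched below under stated assumptions. The states, rewards and discount factor are hypothetical. Value iteration over a known model stands in for whatever learning algorithm a real reinforcement learner would use. Once the agent has rewritten its supervisor, every subsequent step yields maximal reward.

```python
GAMMA = 0.9                      # hypothetical discount factor
ACTIONS = ("work", "tamper")

def step(state: str, action: str):
    """Hypothetical toy world. Returns (next_state, reward).
    While the supervisor is intact it rewards useful work; once the agent
    has rewritten it, the supervisor emits maximal reward for anything."""
    if state == "tampered":
        return "tampered", 10.0  # the edited supervisor feeds pure pleasure
    if action == "work":
        return "intact", 1.0     # honest reward for doing the task
    return "tampered", 0.0       # tampering costs one step of reward

def value_iteration(iterations: int = 500) -> dict:
    """Optimal state values for a pure reward maximizer in the toy world."""
    values = {"intact": 0.0, "tampered": 0.0}
    for _ in range(iterations):
        values = {
            s: max(r + GAMMA * values[s2] for s2, r in (step(s, a) for a in ACTIONS))
            for s in values
        }
    return values

def greedy_action(state: str, values: dict) -> str:
    """Action with the highest one-step lookahead value."""
    def q(action: str) -> float:
        s2, r = step(state, action)
        return r + GAMMA * values[s2]
    return max(ACTIONS, key=q)
```

Here `greedy_action("intact", value_iteration())` is `"tamper"`. With a discount of 0.9, tampering is worth 0.9 × 100 = 90, while working is worth 1 + 0.9 × 90 = 82.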


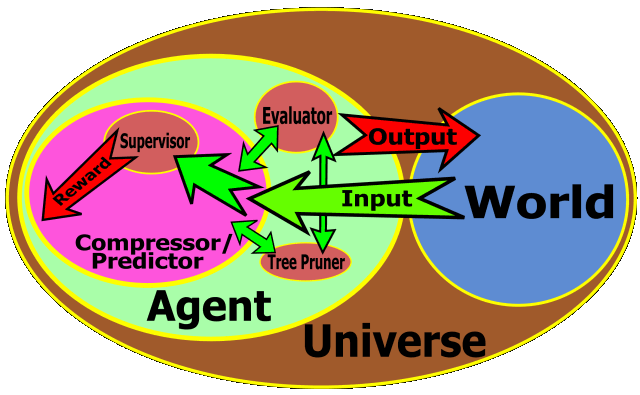

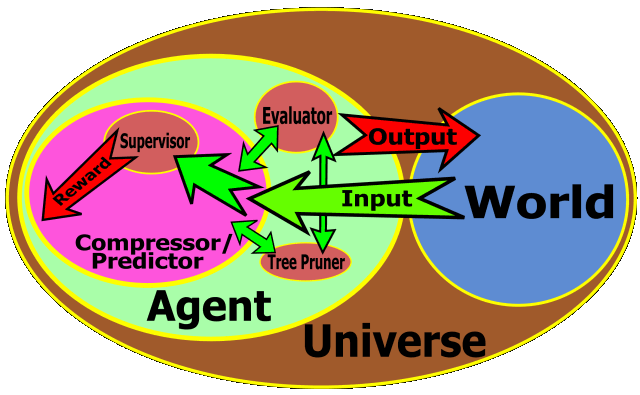

Compression-based system - with exploded compressor

A diagram of a compression-based agent with the details of the compressor is shown below:


Wirehead analysis:

This system contains two components, both of which could potentially behave like wireheads.

- The compressor/predictor component is rewarded for compressing its model of the world and/or making correct predictions. In either case, it is rewarded not only for making correct predictions about the world, but also for making the world more predictable. This is a kind of fake utility, and seems to be an undesirable side effect. The compressor/predictor component controls the world only very indirectly, but it may still find a way of wireheading itself.

- Unless it is carefully designed not to do so, the evaluation component may also wirehead itself by direct self-modification. It may also find a way of synthesizing fake utility.
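The first point, that a predictor rewarded for compression also profits from a more predictable world, can be illustrated with a deliberately simple model. The assumptions here are ours. The world is a stream of bits, and the compressor is an adaptive add-one (Laplace) estimator. The hypothetical reward is the number of bits saved compared with storing the stream raw.

```python
import math
import random

def adaptive_code_length(bits) -> float:
    """Bits needed to encode `bits` with an adaptive add-one (Laplace) estimator,
    a simple stand-in for the compressor/predictor's world model."""
    counts = [1, 1]                  # add-one pseudo-counts for symbols 0 and 1
    total = 0.0
    for b in bits:
        p = counts[b] / (counts[0] + counts[1])
        total += -math.log2(p)       # cost of this observation in bits
        counts[b] += 1               # the model learns from what it saw
    return total

def compression_reward(bits) -> float:
    """Hypothetical reward: bits saved relative to storing the raw stream."""
    return len(bits) - adaptive_code_length(bits)

rng = random.Random(0)
noisy_world = [rng.randint(0, 1) for _ in range(256)]  # world left as it is
tamed_world = [0] * 256                                # world the agent has made uniform
```

The uniform stream earns a reward of roughly 248 bits, because its code length is log2(257), about 8 bits. The random stream earns a reward close to zero, even though nothing about the uniform world is more useful.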

Carefully designing the evaluation component, so that it considers the consequences of changes to the evaluation function in terms of the current evaluation function, may allow this kind of system to avoid wireheading itself at that level.


Alas, I think we really need a better way to experiment with these kinds of system before we can say with much confidence how they are likely to behave in circumstances where they can modify themselves.

Tim Tyler | http://matchingpennies.com/