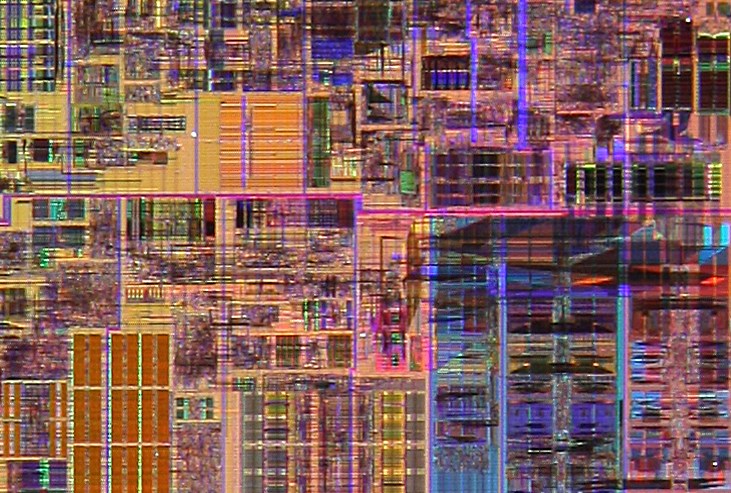

Universal Brains

Universal Brains and universal computers

Physical approximations to universal computers have become popular devices. Such computers are programmable devices with a specified number of binary inputs and outputs. They are characterized by their I/O frequencies, their memory capacity, their compute speed and their ability to perform tasks in parallel. In practice there are other variables as well: computers may also differ in their relative performance on floating point arithmetic, graphical processing functions - and so on.

We could also create physical approximations to universal brains. These would be optimization machines. As with universal computers there would be an array of I/O, a memory capacity, a compute speed - and so on. The basic idea would be to create a modular learning component that could be reused in a variety of circumstances. So: you could unplug the brain of your electronic dog, plug it into your electronic cat and have everything up and running again fairly quickly.

Since we already have universal computers - and they can simulate any other sort of computer - it may not be obvious why we need universal brains. The main problem is that many existing computers have poor quality architectures. They are often very poor at parallel processing tasks, and have hard-wired error detection and correction strategies which in turn impose performance limitations. A universal brain could avoid these problems.

Brain-like computing hardware is likely to have two possible configurations. One would be as a co-processor - an addition to an existing computing system. The other would be as a stand-alone system - a dedicated combined processing and memory unit.
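To make the "unplug it from the dog, plug it into the cat" idea a little more concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the names `TableBrain` and `Body`, the scoring functions, and the choice to wipe the brain's experience when it is moved to a new body are illustrative assumptions, not a description of any real hardware or library. The "brain" is just a crude trial-and-error optimizer with a fixed number of binary inputs and outputs.

```python
import random
from typing import Callable, Sequence


class TableBrain:
    """Hypothetical pluggable optimizer with fixed binary I/O widths."""

    def __init__(self, n_inputs: int, n_outputs: int, seed: int = 0):
        self.n_inputs = n_inputs
        self.n_outputs = n_outputs
        self._rng = random.Random(seed)
        self._best = {}    # input bits -> (best reward so far, output bits)
        self._last = None  # most recent (input, output) pair awaiting a reward

    def act(self, inputs: Sequence[int]) -> tuple:
        key = tuple(inputs)
        assert len(key) == self.n_inputs
        known = self._best.get(key)
        # Explore half the time, or whenever this input has not been seen yet.
        if known is None or self._rng.random() < 0.5:
            out = tuple(self._rng.randint(0, 1) for _ in range(self.n_outputs))
        else:
            out = known[1]
        self._last = (key, out)
        return out

    def reward(self, value: float) -> None:
        # Remember the output that earned the highest reward for each input.
        key, out = self._last
        if key not in self._best or value > self._best[key][0]:
            self._best[key] = (value, out)

    def reset(self) -> None:
        self._best.clear()
        self._last = None

    def best_output(self, inputs: Sequence[int]) -> tuple:
        return self._best[tuple(inputs)][1]


class Body:
    """Hypothetical robot body: supplies sensor bits and scores motor bits."""

    def __init__(self, name: str, score: Callable[[tuple, tuple], float]):
        self.name = name
        self.score = score
        self.brain = None

    def plug_in(self, brain: TableBrain) -> None:
        brain.reset()  # assumption: experience from the old body is discarded
        self.brain = brain

    def live(self, steps: int, rng: random.Random) -> None:
        for _ in range(steps):
            sensors = tuple(rng.randint(0, 1) for _ in range(self.brain.n_inputs))
            motors = self.brain.act(sensors)
            self.brain.reward(self.score(sensors, motors))


# The dog is rewarded for copying its sensor bits, the cat for inverting them.
dog = Body("dog", lambda s, m: sum(a == b for a, b in zip(s, m)))
cat = Body("cat", lambda s, m: sum(a != b for a, b in zip(s, m)))

rng = random.Random(1)
brain = TableBrain(n_inputs=2, n_outputs=2)

dog.plug_in(brain)
dog.live(2000, rng)
dog_habit = brain.best_output((1, 0))

cat.plug_in(brain)  # same brain, different body
cat.live(2000, rng)
cat_habit = brain.best_output((1, 0))
```

The point of the sketch is only the shape of the interface: the body knows nothing about how the brain learns, and the brain knows nothing about what the body is for, so the same component can be moved between them and relearn from reward alone.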

Cloud computing

The place where we most urgently need hardware for machine learning is the cloud. That is where a lot of machine learning services run. Until recently there were few options in this area, and those that existed mostly involved GPU-rich machines. However, in December 2016, Amazon Web Services launched their first machine with some decent parallel hardware.

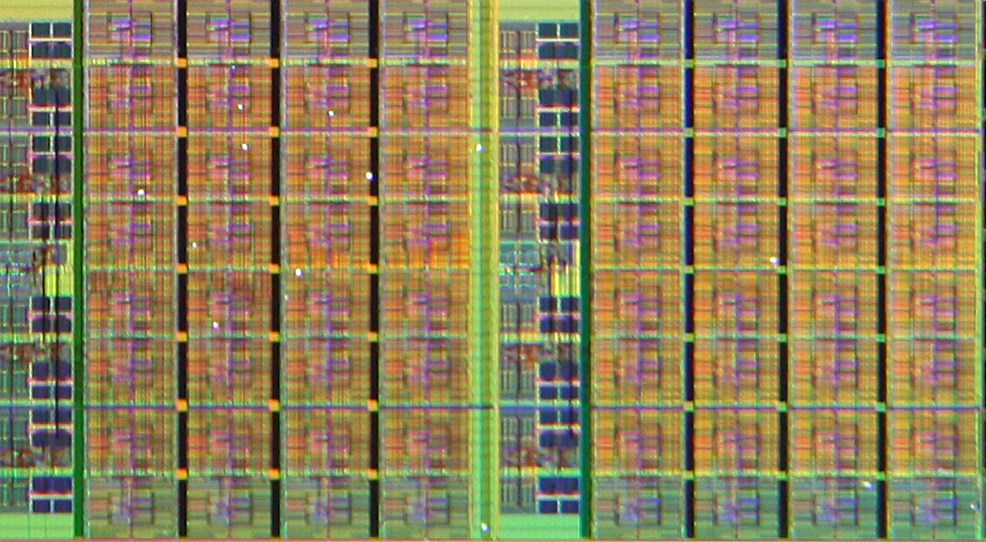

FPGAs

FPGAs are very general purpose. They ought to be a lot better than GPUs at providing acceleration - but they might not be optimal for machine learning. Instead, they are optimized for a rather different problem: rapid prototyping of ASICs. There, you have to be able to run lots of different circuits efficiently, and reprogramming is not very time-critical. People tend to use ASICs for specific applications because they are faster and cheaper if you don't need the flexibility of an FPGA.

We will have to wait and see how many people take advantage of Amazon's new parallel hardware in the cloud. However, in principle, the availability of rentable parallel hardware should be a boon to those building machine intelligence systems.

ASICs

Machine-written programs

It could be that what we want in the short term is ASIC hardware for accelerating deep learning applications. However, once computers are writing the programs, they ought to be able to handle parallel programming better than humans can. If so, they ought to be able to design circuits, rather than serial instruction streams, and something like an FPGA may be the best target hardware for them. In that case we might want the "partial reprogrammability" feature - which allows the chip to be reprogrammed a bit at a time.

Future prospects

Brains need software as well as hardware. However, having suitable hardware available is a good first step.

Hardware for deep learning systems might seem like a small and specialist application domain. However, as I have argued in another essay, machine intelligence could be the application domain that eventually breaks the back of the Von Neumann architecture that has dominated the computing landscape for decades. This whole area could turn into an important one.
